Robotics

Estevan Silveira @ Envisioning

The history of robotics is intertwined with the history of automation. Aristotle (384-322 BCE), in his Politics, dreamed of tools that could, when ordered by humans, work on their own, as if "looms were to weave by themselves." That longing became reality centuries later with the rise of industrial automation and, in more recent times, with robotics and its fully operational automatons. Robotics is an interdisciplinary domain, drawing on computer science and mechanical engineering, centered on the building of machines (hereinafter called robots) that can replicate or even replace human actions.

The world of scientific fantasies is abuzz with robots overtaking humans or, alas!, their jobs. Before the robotic takeover strikes, let us look at this in a reflective way. 'Taylor' is the problem, not 'Ford'. That is, we should be more concerned with robots occupying the positions of our bosses than those on the shop floor: goods-to-person and collaborative picking robots are welcome, but there is something fishy about thorough managerial automation, the hyper-rationalization of command and control. Think about it for a second: how can something as subjective as leadership be automated?

Internet Robots

Eliza, the mother of all chatbots, or conversational agents, was created by Joseph Weizenbaum at MIT in 1966. Her job was to establish convincing dialogues with humans, to the point that they could not determine whether she had robotic origins, something like the Voight-Kampff test from the movie Blade Runner (1982), designed to distinguish androids from humans. When that happens, regardless of the outcome, you might have to realize that robots are hardware plus software. Bots are algorithmic robots coded to automate conversation.
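To make "coded to automate conversation" concrete, here is a minimal sketch of the pattern-and-template approach that Eliza-style bots rely on. The rule table, the reflection map, and the function names are hypothetical illustrations, not Weizenbaum's original script.

```python
import re

# Hypothetical rule table: (pattern, response template).
# These rules are illustrative only, not the original Eliza script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
]

# Swap first and second person so the echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Lowercase the fragment and swap pronouns word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply of the first matching rule, or a neutral prompt."""
    cleaned = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY
```

Calling `respond("I am tired of my job.")` echoes the user's own words back as a question. Nothing in the code understands the sentence, which is one reason such bots struggle to read between the lines.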

We can say that, up to a point, chatbots are a combination of social robotics, a term usually associated with Robot Caregivers, and service robotics, a term usually associated with housekeeper robots. More precisely, chatbots can be one of the touchpoints that are bundled as part of a customer journey, in accordance with a service design or omnichannel strategy. Maybe you would like to merge usual chat programs with GPT-3, an AI language model that employs large deep neural networks. But when it comes to semantics, such models can produce authoritative-sounding statements that are completely false. Chatbots, even if AI-powered, have a hard time reading between the lines.

Robotics Education Ecosystems

Some years from now, wherever your eyes land, you will always find one of them. Welcome to Robotland. Fully autonomous, or 'self-driving', electric vehicles run on roads able to charge batteries on the fly through Inductive Transport Charging. Boston Dynamics' fleet, formerly frightening, now gently co-works with humans in warehouses to retrieve goods. Kuka's industrial robots have left behind doing only welding-type tasks and begun to perfect themselves at 4D printing active origami. And so forth.

Today, things are more subtle. As imagination retreats, other realities appear much more urgent and bring our feet back to the ground, such as the use of robots in education. To spice up the pull that robots exert on children, learning policies should add an 'A' to the STEM acronym. So, Science, Technology, Engineering and Math becomes STEAM, or Science, Technology, Engineering, Arts and Math. It is worth noting that some visionaries are building a robotics education ecosystem across Africa, combining video game characters, robotics and AR. Presumably, the Global South has a plan to leapfrog the North.

What Lies Ahead

Soft Robotics

The so-called end-effectors have been seen as ripe for innovation. These are devices that attach to a robot's wrist, allowing it to pick up and place materials of various shapes, sizes, and textures. Eventually, they may forgo solid things in favor of more delicate or biological materials. There is a great deal of development going on in soft tissue surgical robots, flexible manufacturing in the food industry, and, laying grippers aside for a while, deep-sea exploration. Speaking of the sea, think of robots made of elastomeric polymers, such as Robert F. Shepherd's multigait soft robot, inspired by marine animals that do not have hard internal skeletons. Look now to the future, in which nature-restoring biobots, in the form of aquatic plants, clean unwanted algae off dead coral, produce medicine seed pods for fishes and absorb excess carbon dioxide, helping relieve ocean acidification. In the matter of climate change remediation, soft is better than hard.

Swarm Robotics

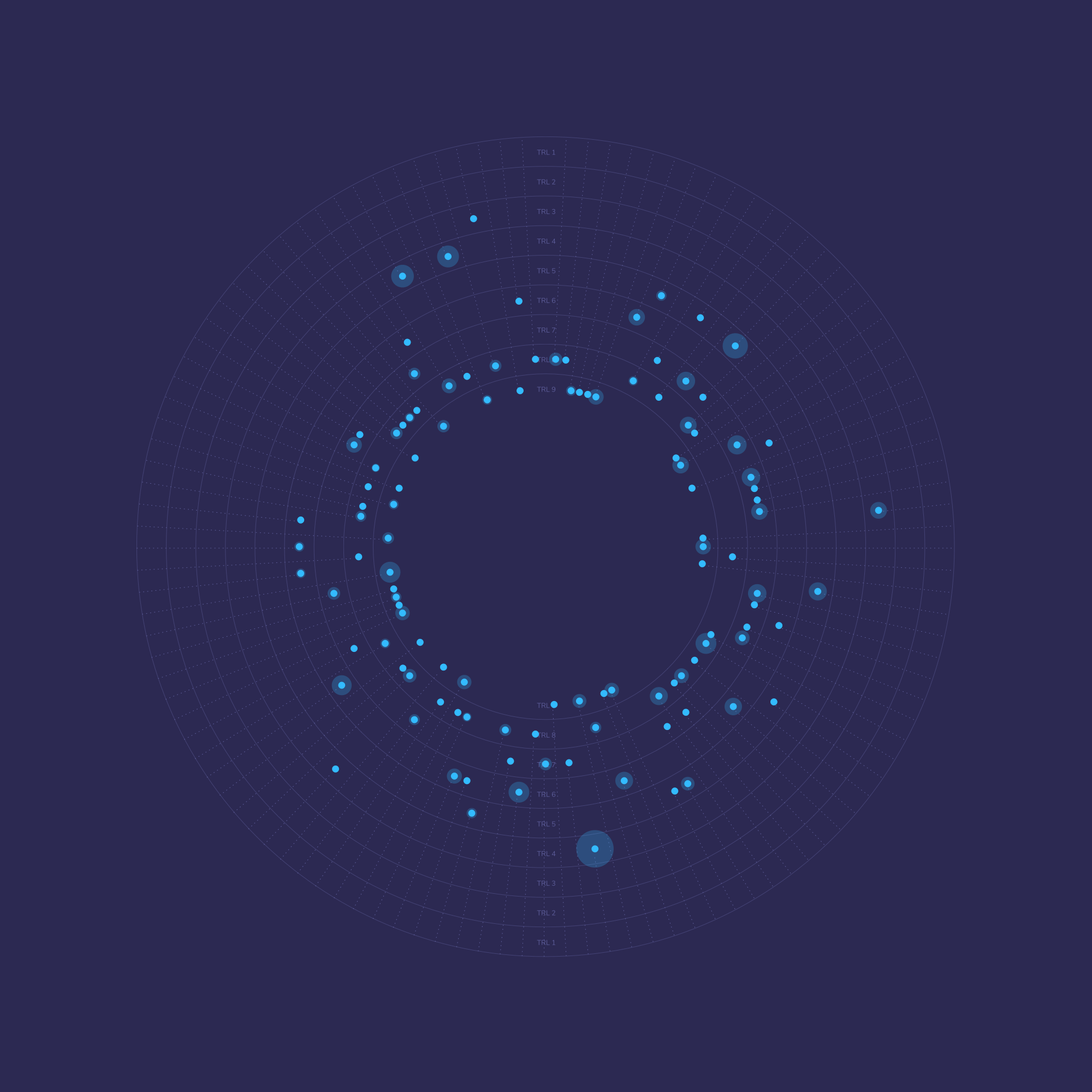

The next step for centralized multi-robot systems is the development of robot swarms, that is, decentralized units souped up with sensing autonomy and self-organization. At this stage, we have simultaneous localization and mapping (SLAM) robots, whose main job is exploration and the production of accurate maps. In due course, we shall see robotic devices with a certain degree of epistemic autonomy, capable of modifying their own internal structure in open-ended ways. Multi-robot SLAM will therefore not only share raw and processed data in a coordinated way, but will also be fitted with evaluative parts that direct the modification of the mechanisms mediating the sensorimotor coordination of each individual.
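As a rough illustration of what sharing processed map data between robots could look like, the sketch below merges occupancy grids from several robots by summing per-cell log-odds. It assumes the grids are already aligned to a common frame and that the robots' observations are independent. Both are strong simplifications, and the `merge_grids` name is hypothetical.

```python
from typing import List

# Each cell holds the log-odds that the cell is occupied:
# positive = likely occupied, negative = likely free, 0.0 = unknown.
Grid = List[List[float]]


def merge_grids(grids: List[Grid]) -> Grid:
    """Fuse aligned, same-sized grids by adding log-odds cell by cell.

    Assumes all grids share one coordinate frame and that each robot's
    evidence is independent (an idealization for this sketch).
    """
    if not grids:
        raise ValueError("need at least one grid")
    rows, cols = len(grids[0]), len(grids[0][0])
    merged = [[0.0] * cols for _ in range(rows)]
    for grid in grids:
        if len(grid) != rows or any(len(row) != cols for row in grid):
            raise ValueError("all grids must have the same shape")
        for r in range(rows):
            for c in range(cols):
                merged[r][c] += grid[r][c]
    return merged
```

Cells that only one robot has seen keep that robot's estimate, while cells seen by several robots accumulate evidence. No central coordinator is required, since any robot can run the same merge on whatever grids it receives.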

Methods

Inductive transport charging is an energy transfer mechanism that can be applied to roads and highways to wirelessly charge the onboard batteries of vehicles, by coupling an alternating electromagnetic field to an in-vehicle induction coil. Primary coil modules installed within the road surface create a magnetic field, which induces an electric current in a secondary coil placed under the vehicle, charging the vehicle's batteries. Some initiatives are studying magnetizable concrete materials to achieve inductive transport charging. This solution functions with parked vehicles using fixed pods, or with moving vehicles in a 'dynamic charging' mechanism through electrified roads. Additionally, wireless charging could become bidirectional: not only from the road to the vehicle but also from the vehicle to the road, by harnessing the energy generated from braking.
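The coupling between the road coil and the vehicle coil follows Faraday's law of induction. As a back-of-the-envelope sketch, assume a sinusoidal primary current and a fixed mutual inductance M between the two coils. The peak voltage induced in the secondary coil is then 2πf·M·I. The function name and the numbers in the usage note are illustrative assumptions, not figures from any deployed system.

```python
import math


def peak_induced_emf_volts(
    mutual_inductance_h: float,
    primary_current_peak_a: float,
    frequency_hz: float,
) -> float:
    """Peak EMF in the secondary coil for a sinusoidal primary current.

    From emf(t) = -M * dI/dt with I(t) = I_peak * sin(2*pi*f*t),
    the peak magnitude is 2*pi*f*M*I_peak.
    """
    if min(mutual_inductance_h, primary_current_peak_a, frequency_hz) < 0:
        raise ValueError("inputs must be non-negative")
    return 2 * math.pi * frequency_hz * mutual_inductance_h * primary_current_peak_a
```

For example, with an assumed mutual inductance of 10 µH, a 10 A peak primary current, and an 85 kHz drive frequency, `peak_induced_emf_volts(1e-5, 10, 85_000)` gives roughly 53 V. The mutual inductance falls as the gap between the coils grows or as they drift out of alignment, which is part of why dynamic charging on a moving vehicle is harder than charging a parked one.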

Machine Vision is a method through which a computer digitizes an image, processes the data and takes some type of automated action. It allows systems to understand and interpret the environment using live or recorded images, tag their content, and enable programs to perform automated tasks that previously required human supervision. Using one or more video cameras with analog-to-digital conversion and signal processing, a computer or robot controller receives the image data. Systems can also perceive their surroundings beyond the visible spectrum, using infrared, ultraviolet, or X-ray frequencies to enhance image processing precision.
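The digitize, process, act chain can be sketched in a few lines. The example below treats an already-digitized grayscale image as a grid of 0-255 intensities, thresholds it, and takes a simple automated decision. The threshold, the "reject"/"accept" labels, and the function names are hypothetical choices for illustration. A real system would add steps such as filtering and feature extraction.

```python
from typing import List

# A digitized grayscale image: rows of pixel intensities from 0 to 255.
Image = List[List[int]]


def binarize(image: Image, threshold: int = 128) -> List[List[int]]:
    """Processing step: mark each pixel as bright (1) or dark (0)."""
    return [[1 if pixel >= threshold else 0 for pixel in row] for row in image]


def bright_fraction(mask: List[List[int]]) -> float:
    """Share of pixels marked bright; 0.0 for an empty image."""
    total = sum(len(row) for row in mask)
    return sum(sum(row) for row in mask) / total if total else 0.0


def decide(image: Image, threshold: int = 128, max_bright_fraction: float = 0.25) -> str:
    """Action step: reject the item if too much of the image is bright."""
    fraction = bright_fraction(binarize(image, threshold))
    return "reject" if fraction > max_bright_fraction else "accept"
```

A robot controller could call `decide` on each camera frame and route a part to one bin or another, which is the kind of task that previously required a human inspector.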

Direct Air Capture (DAC) is the process of capturing carbon dioxide directly from ambient air with an engineered, mechanical system. There are two approaches, liquid and solid, to capturing CO2 from the air. Liquid systems pass air through chemical solutions, removing the CO2 while returning the rest of the air to the environment. Solid systems make use of solid sorbent filters that chemically bind with CO2; when these filters are heated, they release the concentrated CO2, which can be captured for storage or use. The CO2 removed by DAC can be permanently stored in deep geological formations, or it can be used in food processing, for example, or combined with hydrogen to produce synthetic fuels. When CO2 is geologically stored, it is permanently removed from the atmosphere.

Traditional rainfall monitoring techniques (rain gauges, satellites, radars) can be costly, and weather stations are often sparsely located. Commercial Microwave Links (CMLs) can provide near-ground rainfall observations in sparsely gauged regions, complement existing observations in regions covered by conventional monitoring networks, and improve the space–time resolution and accuracy of rainfall products. A CML provides a line-of-sight radio connection between two locations, and is commonly used by cellular and other operators to interconnect base station towers. The technique of rainfall estimation using CMLs is based on the fact that rainfall attenuates the radio signals between transmitter and receiver stations in the network. A measurement of rain-induced attenuation can be used to derive the average rain rate along the path. The CML method enables rain measurements in places that have been hard to access in the past or where rainfall has never been measured before. Furthermore, CMLs' implementation cost is minimal: the network infrastructure is in place, and the CML data is already collected and logged by many cellular operators for quality assurance needs. However, there are some limitations with this technology. As the data stems from a network operated for a different purpose, with no default process to distribute it, unlocking and acquiring CML data is a challenge. Further, fluctuations in signal levels can also be caused by changes in water vapor content and air temperature, as well as strong solar radiation. Other limitations include the overestimation of rain-induced attenuation due to an assumed baseline determination.
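The step from attenuation to rain rate is commonly modeled with a power law, k = a·R^b, where k is the specific attenuation in dB/km and R the rain rate in mm/h. The sketch below inverts that relation for a single link. The coefficients `a` and `b` depend on frequency and polarization and must be looked up for the actual link, so the values in the usage note are placeholders. The simple baseline subtraction is also an assumption, and it is exactly the step where the overestimation mentioned above can creep in.

```python
def rain_induced_attenuation_db(baseline_dbm: float, received_dbm: float) -> float:
    """Attenuation attributed to rain: dry-weather baseline minus the
    received signal level, clipped at zero. Assumes the baseline is known."""
    return max(0.0, baseline_dbm - received_dbm)


def path_average_rain_rate_mm_h(
    attenuation_db: float,
    path_length_km: float,
    a: float,
    b: float,
) -> float:
    """Invert k = a * R**b, where k = attenuation / path length (dB/km).

    a and b are link-specific power-law coefficients (frequency- and
    polarization-dependent); callers must supply appropriate values.
    """
    if path_length_km <= 0 or a <= 0 or b <= 0:
        raise ValueError("path length and coefficients must be positive")
    specific_attenuation = attenuation_db / path_length_km
    return (specific_attenuation / a) ** (1.0 / b)
```

With a baseline of -40 dBm and a received level of -42.5 dBm on a 5 km link, the rain-induced attenuation is 2.5 dB, or 0.5 dB/km. With placeholder coefficients a = 0.1 and b = 1.0, that corresponds to a path-average rain rate of about 5 mm/h.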

Directed Energy Deposition (DED) is a 3D printing method that uses a focused energy source, such as a laser, an electron beam or a plasma arc, to melt a material as it is deposited from a nozzle mounted on a multi-axis arm onto a specified surface, where it solidifies to form an object. Material can be deposited onto existing surfaces from any angle. DED is commonly used with metals for repairing and maintaining structural parts, such as fixing turbine blades.

Selective Laser Sintering (SLS) is a 3D printing process where tiny particles of plastic, ceramic or glass are fused together by heat from a high-power laser to form a solid, 3D object. The process starts by heating a bin of polymer powder, typically nylon (a polyamide), to just below its melting temperature. A very thin layer of the powdered material is then deposited onto a build platform by a recoating blade. A CO2 laser beam scans the surface, steered by a pair of galvos, and selectively sinters the powder to solidify a cross-section of the object. When the entire cross-section is scanned, the build platform moves down by one layer thickness. The recoating blade deposits a fresh layer of powder on top of the recently scanned layer, and the laser sinters the next cross-section of the object. This is repeated until the object is fully formed. SLS is typically used for creating functional parts with complex geometries.
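The recoat, sinter, lower cycle described above amounts to a simple loop. As a sketch, the function below computes how far the build platform has descended after each layer for a given part height and layer thickness. The function name and the example values are hypothetical, and the sintering itself is not modeled.

```python
import math
from typing import List


def platform_depths_mm(part_height_mm: float, layer_thickness_mm: float) -> List[float]:
    """Cumulative platform descent after each layer of the build."""
    if part_height_mm <= 0 or layer_thickness_mm <= 0:
        raise ValueError("height and layer thickness must be positive")
    # The small epsilon guards against floating-point noise in the division.
    layer_count = math.ceil(part_height_mm / layer_thickness_mm - 1e-9)
    depths = []
    for layer in range(1, layer_count + 1):
        # Per layer: the blade recoats, the laser sinters the cross-section
        # (not modeled here), then the platform drops one layer thickness.
        depths.append(round(layer * layer_thickness_mm, 6))
    return depths
```

For a 0.5 mm tall feature built with 0.1 mm layers, the function returns five depths ending at 0.5 mm. A part whose height is not an exact multiple of the layer thickness needs one extra layer, which is why the count is rounded up.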

Fused Deposition Modeling (FDM) is a 3D printing material extrusion method where a printer nozzle in the extrusion head deposits filaments, typically thermoplastics. The nozzle is heated to the desired temperature, and the filament melts as it is pushed through. The printhead moves the extrusion head along the X and Y directions above a build platform, depositing melted material onto the build plate according to a specific pattern or model, where it cools down and solidifies. Once a layer is complete, the printhead proceeds to lay down another, repeating layers until the object is fully formed. FDM is a popular method for producing custom prototypes.

Material Jetting is a 3D inkjet printing process where droplets of a photopolymer, a liquid photoreactive material, are selectively deposited onto a build plate in a line-wise fashion. The deposited droplets are cured with UV light, and after each layer has been cured, the process is repeated until the 3D object is complete. One advantage of Material Jetting is that different materials can be printed in the same object, in full color. This method can also be used to create support structures from a different material than the object being produced.

Binder Jetting is a 3D printing process that uses a moving industrial printhead to selectively deposit a liquid binding agent onto a powder bed, a thin layer of powder material on a build platform. The binding agent bonds the powder material, such as metal, sand, ceramics or composites, to form the layers of an object. The layers are built up by lowering the powder bed and repeating until the object is fully formed. Compared to conventional manufacturing, Binder Jetting allows for the creation of complex geometries using a range of materials such as metals, sand, ceramics and polymers.